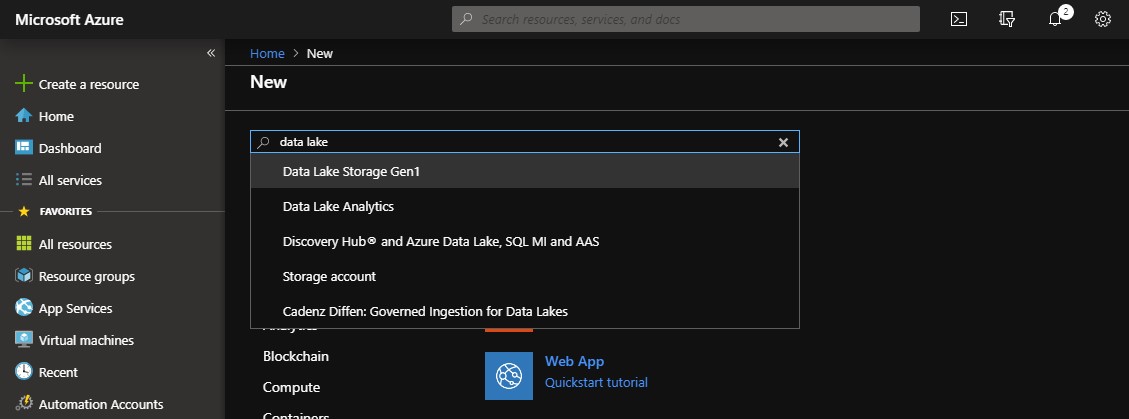

Using Azure Data Lake to Archive, Audit and Analyse Log Files

When operating relatively large and complex environments, having all the operational information available as quickly as possible is one of the key factors that protect you from downtime and breached SLAs, and it gives you a full view of the environment so you can act proactively. There are many cloud and on-premises solutions that can help, but some cases require a more customized approach. Don't get me wrong, Azure OMS and other solutions like it are great for maintaining control of and reporting on your services. However, some organizations have needs that OMS cannot cover, such as really long retention periods or log file formats that cannot be parsed directly. So what we need is a place to store the files and a very fast way to query them. This is where Azure Data Lake comes into play. Uploading your log files to Azure Data Lake or directly feeding the Data Lake using Azure Stream Analytics will give you the ability...