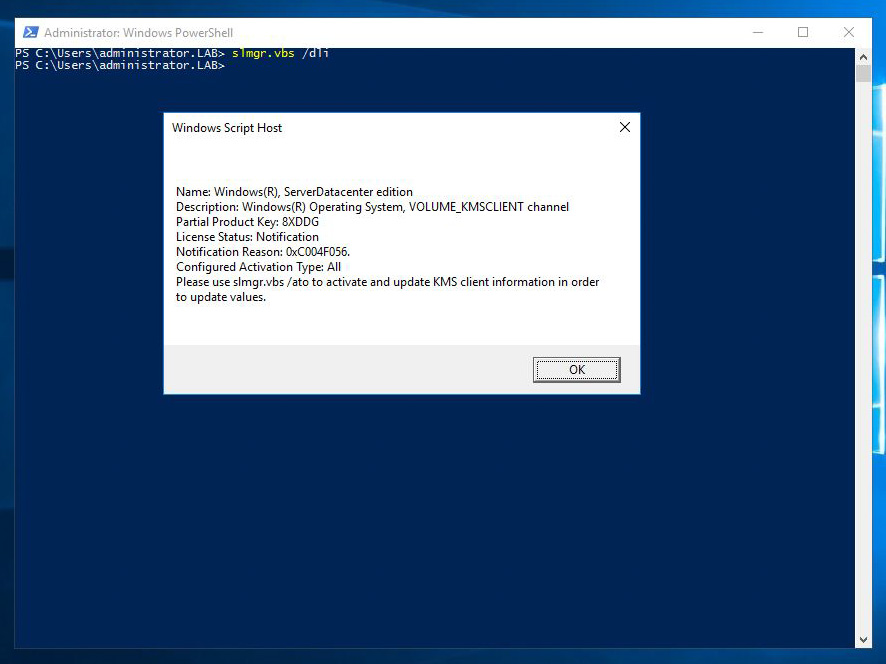

Updating the product key and activating a Windows Server installation

A very common task when installing a Windows operating system is to activate the license and, if required, update the product key. Although this is simple to do from the Control Panel, it can be tricky when the command line is all you've got, as on Server Core installations.

The slmgr script can report the license status of a server, update the product key and activate the license. Let's take a look at the parameters of the script.

To get a short description of the licensing status, use the "/dli" parameter (slmgr /dli).

If you need a more detailed status, use the "/dlv" parameter (slmgr /dlv).

The "/xpr" parameter displays the edition of the server and the status of the activation, including the expiration date when the activation is not permanent (slmgr /xpr).

The "/ipk" parameter allows us to change the product key of the server (slmgr /ipk followed by the new product key).

After changing the product key, we have to activate the license using the "/ato" parameter (slmgr /ato).

After updating the product key...
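If the same steps have to be repeated on several servers, the slmgr parameters described above could be wrapped in a small script. The following is only a minimal sketch in Python, not part of slmgr itself: the helper names (`slmgr_command`, `run_slmgr`, `update_and_activate`) and the action labels are hypothetical, and it assumes that slmgr.vbs sits in the System32 folder under %windir% and that it is run through cscript so the output goes to the console instead of a dialog box.

```python
import os
import subprocess

# The slmgr parameters covered in the text, under hypothetical labels.
SLMGR_ACTIONS = {
    "summary": "/dli",      # short license description
    "details": "/dlv",      # detailed license status
    "expiration": "/xpr",   # edition and activation status
    "set_key": "/ipk",      # change the product key (needs the key as argument)
    "activate": "/ato",     # activate the license
}


def slmgr_command(action, *args):
    """Build the argument list for one slmgr call (hypothetical helper)."""
    # Assumption: slmgr.vbs lives in System32 under %windir%.
    windir = os.environ.get("windir", r"C:\Windows")
    script = windir + r"\System32\slmgr.vbs"
    # cscript prints to the console; //Nologo suppresses the host banner.
    return ["cscript", "//Nologo", script, SLMGR_ACTIONS[action], *args]


def run_slmgr(action, *args):
    """Run one slmgr call on a Windows host and return its console output."""
    result = subprocess.run(
        slmgr_command(action, *args),
        capture_output=True,
        text=True,
        check=True,  # raise if the command exits with a non-zero code
    )
    return result.stdout


def update_and_activate(product_key):
    """Change the key, activate, then return the activation status text."""
    run_slmgr("set_key", product_key)
    run_slmgr("activate")
    return run_slmgr("expiration")
```

On a Windows server with Python available, calling `update_and_activate` with your own product key would run the "/ipk", "/ato" and "/xpr" steps in that order. Building the command separately from running it keeps the argument list easy to inspect before anything is changed on the machine.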