Creating Unique Names for Resources in Bicep

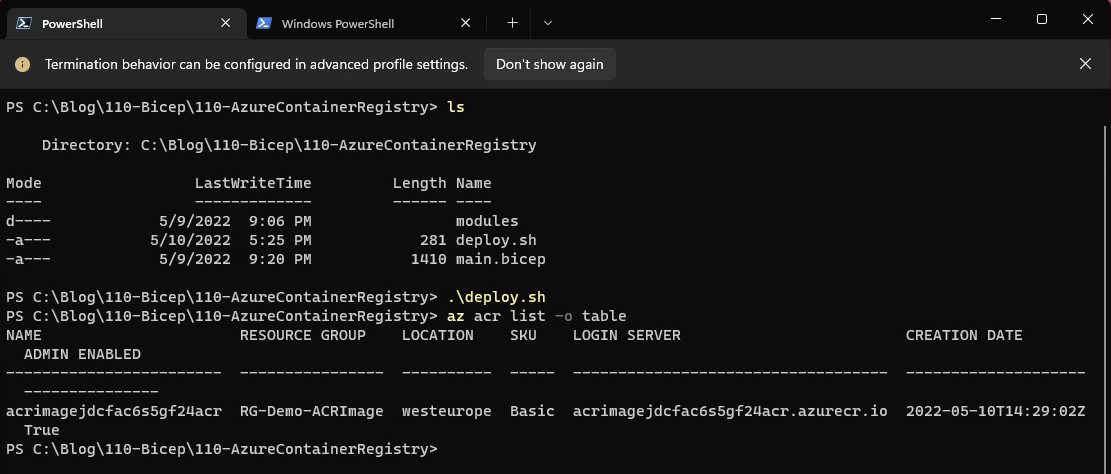

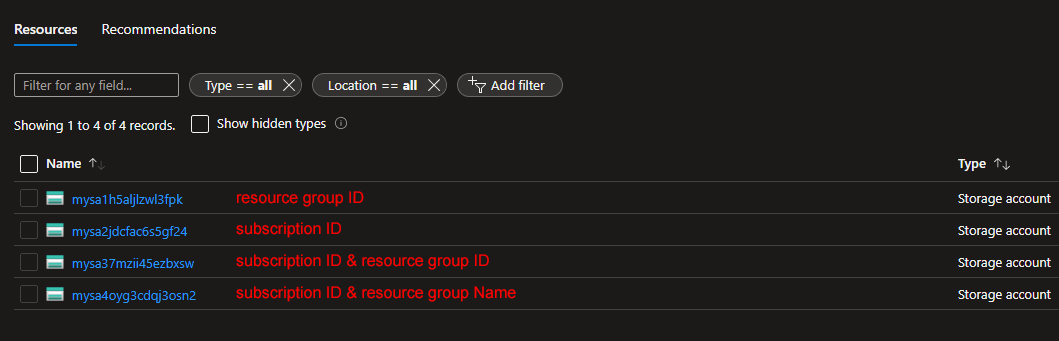

When creating resources on Azure, a deployment sometimes fails because of the name chosen for a resource. Some resources are exposed to the internet as services, so their names must be globally unique across Azure, not just within your own deployments. Take a storage account, for example: its name must not only be unique but also consist of lowercase letters and numbers and be between 3 and 24 characters long. A virtual machine, on the other hand, does not require a globally unique name.

How do we tackle such requirements? The Bicep uniqueString function is here to help. It takes one or more string parameters and returns a deterministic 13-character hash of them, so the same inputs always produce the same string. Combined with scope functions like subscription and resourceGroup, it lets you generate strings unique to your environment. Other string functions like toLower and substring can make your code even more robust.

Let's dive into some examples! The below code is part of a bicep file that deploys ...
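
Separately from that file, here is a minimal sketch of the pattern described above, applied to a storage account. The parameter names, the `stg` prefix, the API version, and the SKU are assumptions chosen for illustration, not values from the original deployment. It also uses take rather than substring to cap the length, because substring fails when the requested length exceeds the string's length.

```bicep
// Hypothetical prefix; storage account names allow only lowercase letters and digits.
param namePrefix string = 'stg'
param location string = resourceGroup().location

// uniqueString returns a deterministic 13-character hash of its inputs,
// so redeploying to the same resource group yields the same suffix.
var suffix = uniqueString(subscription().id, resourceGroup().id)

// Lowercase the combined name and cap it at 24 characters (the storage account limit).
var storageName = take(toLower('${namePrefix}${suffix}'), 24)

// Assumed API version and SKU, for illustration only.
resource storage 'Microsoft.Storage/storageAccounts@2022-09-01' = {
  name: storageName
  location: location
  sku: {
    name: 'Standard_LRS'
  }
  kind: 'StorageV2'
}

output storageAccountName string = storageName
```

Because the suffix is derived from the subscription and resource group IDs, the name stays stable across redeployments of the same environment but differs between environments. Note that a hash makes collisions unlikely rather than impossible, so a deployment can still fail if the generated name is already taken.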